
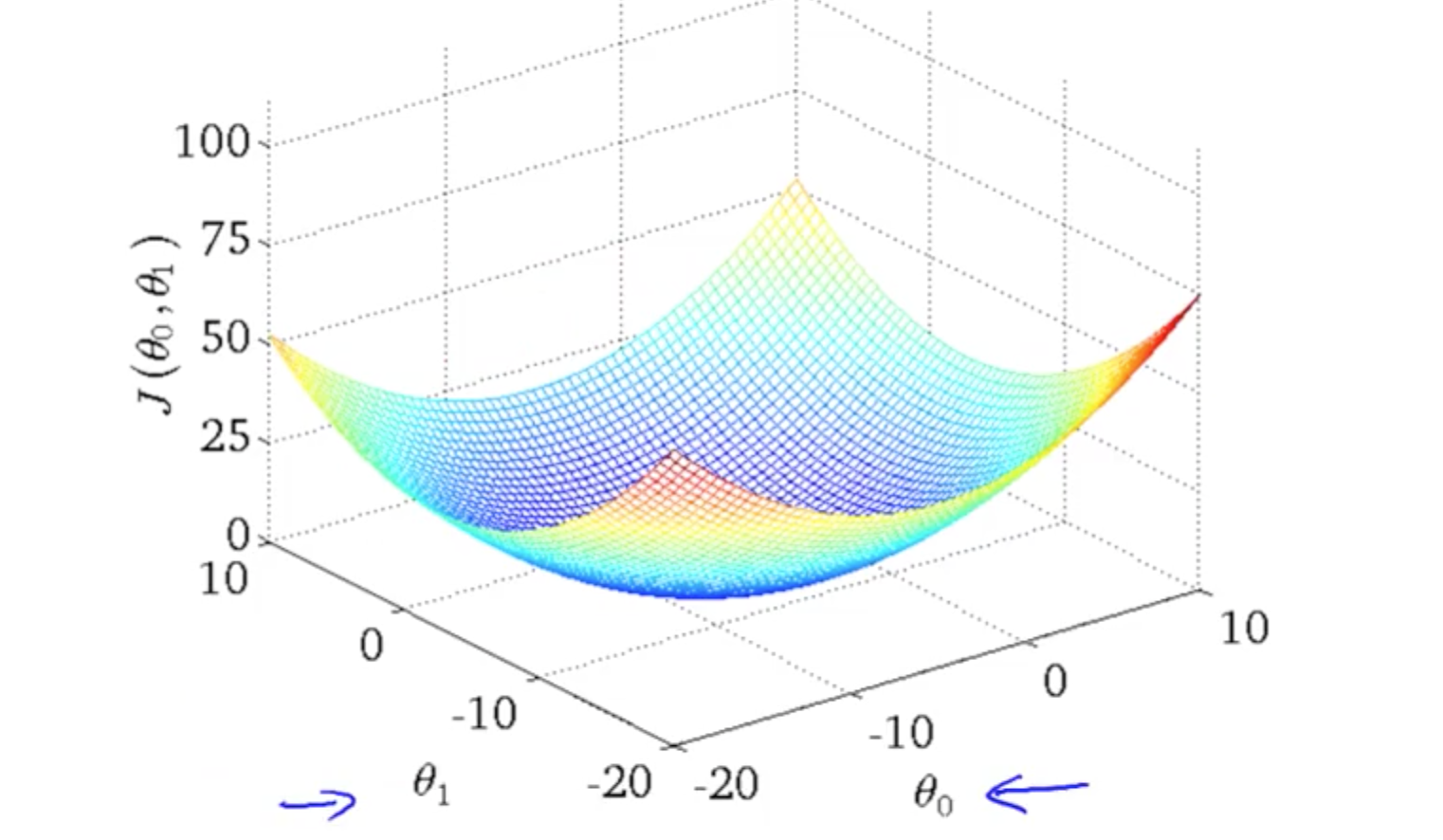

Why do we need the derivative of the SOAR? We need to take the derivative of our metric if we want to find its minimum. Since the SOAR tells us how badly a function performs, we are interested in finding the lowest possible value of it, and therefore we need its derivative. Derivatives are needed for finding the normal equation and for the other minimization technique used in this post. We will take a look at these two techniques later on in the post. So in order to make things a bit easier for ourselves, we avoid using the SOAR and use the SOSR instead, because its derivative is very simple (e.g. the derivative of $x^2$ is just $2x$).

If we compare the SOSR with the SOR, you might say: squaring the residuals yields a different result than the one we actually wanted, doesn't it? We wanted to calculate the sum of residuals, but if we square each term, then large residuals increase in size a lot more than small residuals! If we have three residuals $r_1 = 0.5$, $r_2 = 10$ and $r_3 = 40$, their squares are $0.25$, $100$ and $1600$: $r_1$ decreased, while $r_2$ increased 10-fold and $r_3$ increased 40-fold! This can't be a good thing, can it? Well, in our case it actually is! Now we could try to correct our SOSR by taking the square root of every squared residual. But the thing is, not "correcting" our SOSR might actually be beneficial.

We use the SOSR to measure how well (or rather how poorly) a line fits our data. By squaring the residuals we magnify the effect that large residuals have on this measure. This just means that we care more about large residuals than we do about small residuals. Consider two scenarios. In the first scenario we have three small residuals $r_1$, $r_2$ and $r_3$ of 10 each, so their SOSR is $3 \cdot 10^2 = 300$. In the second scenario we only have one residual, $r_4 = 30$. $r_4$ is exactly $r_1 + r_2 + r_3$, so the total error of the first three residuals is exactly the same as the error of the fourth residual. However, the SOSR in the second case would be $30^2 = 900$. The large residual has a weight three times larger than the three smaller residuals, even though their total error is exactly the same! If we were to take the SOAR instead, both scenarios would score exactly 30.

So it looks like the function $g$ best fits our data! Now I don't know about you, but I'm not fully satisfied with the SOSR yet. I mean, it's a good metric, but we can't really interpret its value on its own. If we did not have the SOSR values for $f$ and $h$, how could we tell if a SOSR of 42200 is very good, decent, bad, or terrible? If we take the square root of 42200, we get roughly 205. This means that our function made a total error of roughly 205,000 dollars. Now that's pretty bad! But this number is a bit tricky to interpret. An error of 205,000 dollars is really bad if we only predicted the price of 7 houses, but what if we predicted 70,000 houses instead? Then this error suddenly doesn't look that bad anymore.

Let's therefore slightly modify our metric. After we sum the squares of every residual, let's also divide the final result by the number of data points in our dataset. In other words, we take the mean (or the average) of the squared residuals instead of their sum. This way, we don't get the combined squared error, but instead we get the average squared error per point:

$$\mathrm{MSE}(m, b) = \frac{1}{7} \sum_{i=1}^{7} \left( y_i - (m x_i + b) \right)^2$$

The other operations in this equation are all matrix multiplications, which have a complexity of at most $O(n^2 m)$. As we see, solving the normal equation has a very bad time complexity.
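To make the difference between the metrics in this post concrete, here is a minimal Python sketch. The function names (`soar`, `sosr`, `mse`), the two residual lists and the tiny dataset are illustrative assumptions, not code or data from the post. It only shows that squaring weights one large residual more heavily than several small ones with the same total, and that the MSE is the SOSR divided by the number of points.

```python
def soar(residuals):
    """Sum of absolute residuals."""
    return sum(abs(r) for r in residuals)


def sosr(residuals):
    """Sum of squared residuals."""
    return sum(r ** 2 for r in residuals)


def mse(xs, ys, m, b):
    """Mean squared error of the line y = m * x + b on the points (xs, ys)."""
    residuals = [y - (m * x + b) for x, y in zip(xs, ys)]
    return sosr(residuals) / len(residuals)


# Assumed example values: three small residuals versus one large residual
# with the same total absolute error.
three_small = [10, 10, 10]
one_large = [30]

print(soar(three_small), soar(one_large))  # 30 30
print(sosr(three_small), sosr(one_large))  # 300 900

# Hypothetical toy dataset, only here to exercise mse().
xs = [1, 2, 3]
ys = [2, 4, 7]
print(mse(xs, ys, m=2, b=0))
```

The SOAR treats both scenarios the same, while the SOSR punishes the single large residual three times as hard, which is exactly the behaviour we want from our metric.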
